AI Disclosure: This blog post was generated through a conversation with Claude Code. The research was AI-assisted. Here are the two prompts that drove it:

Prompt 1: “Let’s do some research. Who else is thinking and talking about the AI-assisted or AI-enabled Software Engineering Organisation of the future? Who are the thought-leaders? And what do they say? What are the top 10 thoughts, ideas, predictions? Make sure the research focuses on the last 3 months. It needs to be current.”

Prompt 2: “Let’s create a ‘The ASO - State of the Union’ blog post with these top 10 insights/findings. The insights/findings should be ~100 words and should feature a list of sources. Read some of the other blog posts to learn the style. Add pictures. Full disclosure: Put a statement at the top of the blog post to point out that the post was generated by AI and document the two prompts to explain where this is coming from.”

Back in February I wrote The Augmented Software Organization - Moving on, arguing that 2026 needs to be the year the industry stops bolting AI onto 2020 workflows and starts redesigning how software engineering organizations actually work. I proposed a 6-stage delivery pipeline and four new high-leverage roles.

So — who else is in this conversation? What does the discourse look like right now, in April 2026?

I asked Claude to do a sweep of the last three months. Here is what came back.

The Top 10 Insights (January–April 2026)

1. AI is a dysfunction amplifier, not a dysfunction fixer

The single strongest point of consensus across the entire discourse. The DORA 2025 findings put it plainly: “AI’s primary role is as an amplifier, magnifying an organization’s existing strengths and weaknesses.” John Cutler adds that interrupt-driven organizations will accelerate their chaos with AI tools. Markus Eisele: AI amplifies ambiguity — if architectural intent is implicit, the model fills the gap with plausible-but-wrong assumptions. This is the theoretical foundation of the ASO thesis: the tools have changed, the workflows have not, and the mismatch is the problem.

Sources: DORA 2025 Report; John Cutler, TBM 412 “Institutionalized Overload (Now With AI)”, March 27, 2026; Markus Eisele, O’Reilly Radar, April 8, 2026

2. Comprehension debt is the hidden organizational crisis

Code volume is increasing while human understanding of that codebase is decreasing, and this gap is invisible in all standard metrics. Addy Osmani (Google Chrome) coined the term “comprehension debt” and cited an Anthropic study of 52 engineers: passive AI users scored 17 percentage points lower on comprehension tests than non-AI-assisted peers (50% vs. 67%). Velocity metrics, DORA scores, and code coverage all look fine while the organization quietly loses grip on its own system. The organizational risk is not AI writing bad code; it is humans no longer being able to catch it.

Sources: Addy Osmani, “Comprehension Debt: The Hidden Cost of AI-Generated Code”, O’Reilly Radar, April 13, 2026
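The 50% vs. 67% comparison can be read two ways, as an absolute gap or as a relative drop, and the two numbers differ. A minimal sketch of that arithmetic, assuming the quoted scores are group averages (the variable and function names are illustrative and not from the study):

```python
# Scores as quoted in the post; assumed here to be group averages in percent.
ai_assisted_score = 50.0
unassisted_score = 67.0


def gap_in_points(low, high):
    """Absolute difference between two percentages, in percentage points."""
    return high - low


def relative_drop(low, high):
    """How much lower `low` is than `high`, as a percentage of `high`."""
    return (high - low) / high * 100.0


points = gap_in_points(ai_assisted_score, unassisted_score)
relative = relative_drop(ai_assisted_score, unassisted_score)
print(f"{points:.0f} percentage points, {relative:.1f}% relative")
```

Read as an absolute gap, the difference is 17 points; read relative to the unassisted group, it is roughly a quarter.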

3. The scarcity equation has inverted: execution is cheap, judgment is scarce

A year ago, the bottleneck was skilled engineers who could write quality code fast. That bottleneck is gone. Ethan Mollick (Wharton): “Now the ‘talent’ is abundant and cheap. What’s scarce is knowing what to ask for.” Tim O’Reilly agrees: AI makes engineering more demanding, not less, because it requires sophisticated judgment about when to trust AI output and when to override it. Both argue that expertise is becoming valuable not for execution but for recognizing quality, articulating precise requirements, and understanding when the generated answer is subtly wrong.

Sources: Ethan Mollick, “Management as AI Superpower”, OneUsefulThing, January 27, 2026; Tim O’Reilly, “The World Needs More Software Engineers”, O’Reilly Radar, April 7, 2026

4. Organizational governance infrastructure for AI agents does not exist yet

Tyler Akidau (O’Reilly) identifies the most dangerous gap in the current landscape: organizations have deployed agents as capabilities but have zero governance infrastructure to manage them. No agent identity management. No task-scoped authorization. No complete observability. No accountability chains. He draws a direct analogy to human resources: “We don’t try to make the humans perfect. We scope their access and actions, monitor their progress, and hold them accountable.” His historical pattern — “capability first, governance after, panic in between” — is exactly where the industry sits right now.

Sources: Tyler Akidau, “Posthuman: We All Built Agents. Nobody Built HR.”, O’Reilly Radar, April 8, 2026
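To make the missing pieces a little more concrete, here is a minimal sketch of what task-scoped authorization with an audit trail could look like. This is not Akidau's design or any existing product; `TaskGrant`, `AuditLog`, `authorize`, and the action names are all hypothetical, chosen only to illustrate the idea of scoping, monitoring, and accountability.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical permission: one agent, one task, an explicit action list."""
    agent_id: str
    task_id: str
    allowed_actions: frozenset
    accountable_owner: str  # the human who answers for this agent's work


@dataclass
class AuditLog:
    """Hypothetical in-memory log; a real system would persist this."""
    entries: list = field(default_factory=list)

    def record(self, grant, action, allowed):
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": grant.agent_id,
            "task_id": grant.task_id,
            "action": action,
            "allowed": allowed,
            "accountable_owner": grant.accountable_owner,
        })


def authorize(grant, action, log):
    """Allow only actions inside the grant, and log every attempt."""
    allowed = action in grant.allowed_actions
    log.record(grant, action, allowed)
    return allowed


grant = TaskGrant(
    agent_id="agent-7",
    task_id="TICKET-123",
    allowed_actions=frozenset({"read_repo", "open_pr"}),
    accountable_owner="alice",
)
log = AuditLog()
print(authorize(grant, "open_pr", log))        # inside the grant
print(authorize(grant, "merge_to_main", log))  # outside the grant
```

The point of the sketch is that denied attempts are logged as well as allowed ones, and every entry names an accountable human.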

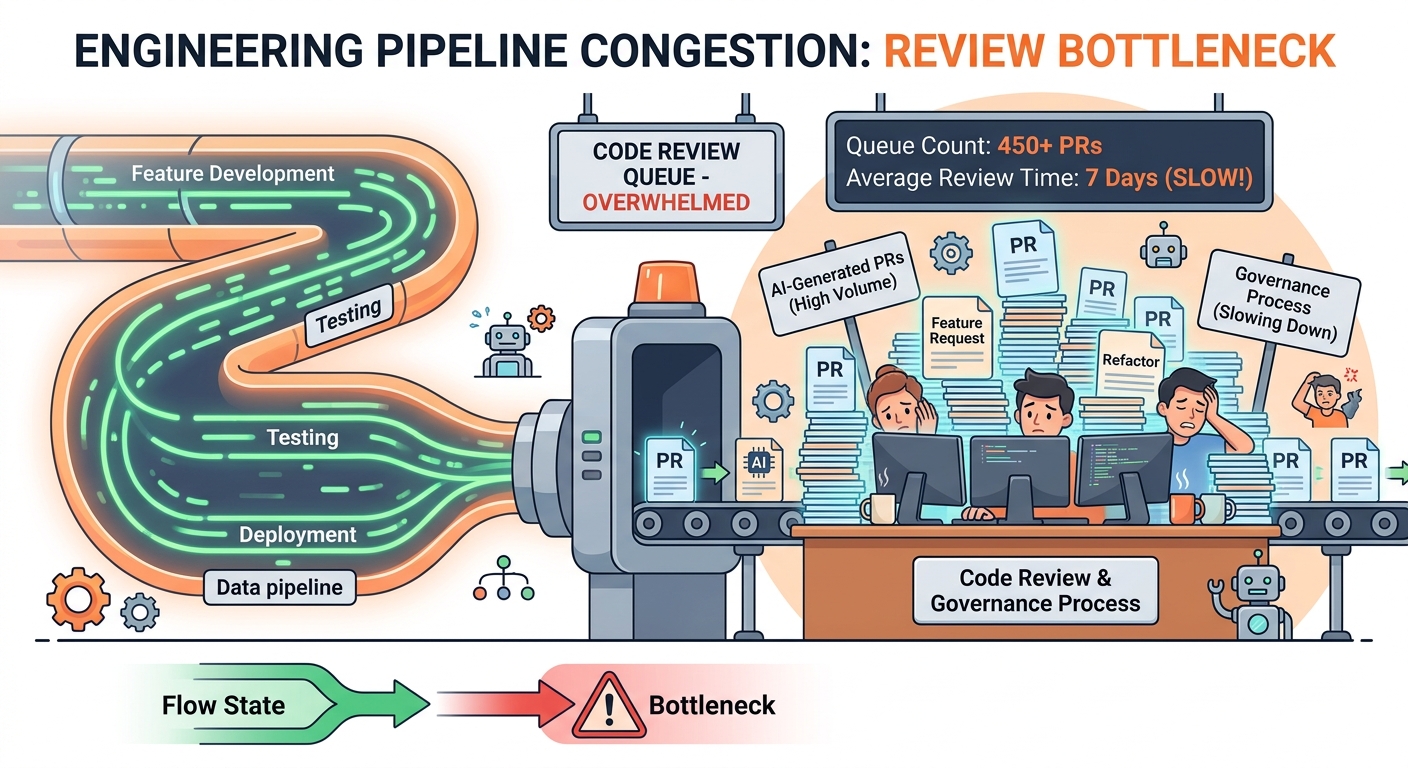

5. The workflow bottleneck has shifted from coding to review and governance

The Pragmatic Engineer’s March 2026 survey (900+ engineers) found that the constraint is no longer AI speed but human review capacity. Engineers are hitting usage limits, not because the AI is slow, but because humans cannot review output fast enough. The New Stack documented open source maintainers “drowning in AI-generated pull requests” as a preview of what enterprise teams face next. Neal Ford and Mark Richards argue architecture must be expressed as machine-executable code, because the review bottleneck cannot be solved by humans reading more documentation. Ankit Jain (Latent Space) goes further: routing AI-generated code through an AI reviewer doesn’t resolve the structural problem, because “when agents write code, ‘fresh eyes’ is just another agent with the same blind spots.” His alternative is a five-layer trust model that replaces review entirely. Its layers include competing agents that generate solutions, deterministic guardrails that replace subjective judgment, acceptance criteria that humans author upfront, and adversarial agents that red-team the output. The shift: “Humans should review specs, plans, constraints, and acceptance criteria, not 500-line diffs.”

Sources: Gergely Orosz, Pragmatic Engineer Survey, March 2026; Jennifer Riggins, The New Stack, April 9, 2026; Neal Ford and Mark Richards, O’Reilly Radar, April 9, 2026; Ankit Jain, “How to Kill the Code Review”, Latent Space, March 2, 2026
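As an illustration of architecture expressed as machine-executable code, here is a minimal sketch of a deterministic dependency check that a pipeline could run instead of asking a human to read a diff. It is not taken from Ford and Richards or from Jain; the layer names, the `ALLOWED_DEPENDENCIES` table, and `find_violations` are assumptions made for the example.

```python
# Hypothetical layering rule: each layer may depend only on the layers listed.
ALLOWED_DEPENDENCIES = {
    "ui": {"service"},
    "service": {"domain"},
    "domain": set(),
}


def find_violations(imports, allowed=ALLOWED_DEPENDENCIES):
    """Return every (source, target) dependency that breaks the layering rule.

    `imports` is a list of (source_layer, target_layer) pairs, assumed to be
    extracted from the codebase by some earlier pipeline step.
    """
    violations = []
    for source, target in imports:
        if source == target:
            continue  # dependencies inside one layer are always fine
        if target not in allowed.get(source, set()):
            violations.append((source, target))
    return violations


observed = [("ui", "service"), ("ui", "domain"), ("domain", "domain")]
print(find_violations(observed))
```

A check like this gives the same answer every time, so a pipeline can block a non-conforming change without a reviewer having to spot it.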

6. The three-tier workforce split: juniors amplified, seniors empowered, middle tier at risk

Kent Beck and Martin Fowler (via Pragmatic Engineer) predict a three-tier split mirroring the Dotcom crash: AI will amplify junior developers, make experienced practitioners more effective, and displace the middle tier. Beck calls this “the golden age of the junior programmer.” DHH found the opposite at Amazon: senior engineers gain far more from AI than juniors, and Amazon now requires senior sign-off before junior-AI-generated code ships to production. Both agree on the middle tier’s exposure. Both also warn that the “Agile industrial complex” pattern is repeating: vendors overselling, actual implementation nuanced and uncertain.

Sources: Kent Beck and Martin Fowler, Pragmatic Engineer “Cycles of Disruption in the Tech Industry”, April 7, 2026; DHH, Pragmatic Engineer “DHH’s New Way of Writing Code”, April 8, 2026

7. “Re-soloing” is quietly dismantling the team knowledge infrastructure

Kent Beck describes a pattern he calls “re-soloing”: programmers now manage multiple AI agents individually rather than working in teams, which differs fundamentally from pair programming’s social and knowledge-transfer benefits. The organizational implication is significant. The knowledge-sharing infrastructure of team-based development — code reviews, pair programming, architectural discussions — is being quietly dismantled as individual developers become more productive with their own agent fleet. This creates systemic organizational knowledge risk that is completely invisible in output metrics. Organizations may be trading long-term system understanding for short-term velocity.

Sources: Kent Beck, Pragmatic Engineer “Cycles of Disruption in the Tech Industry”, April 7, 2026

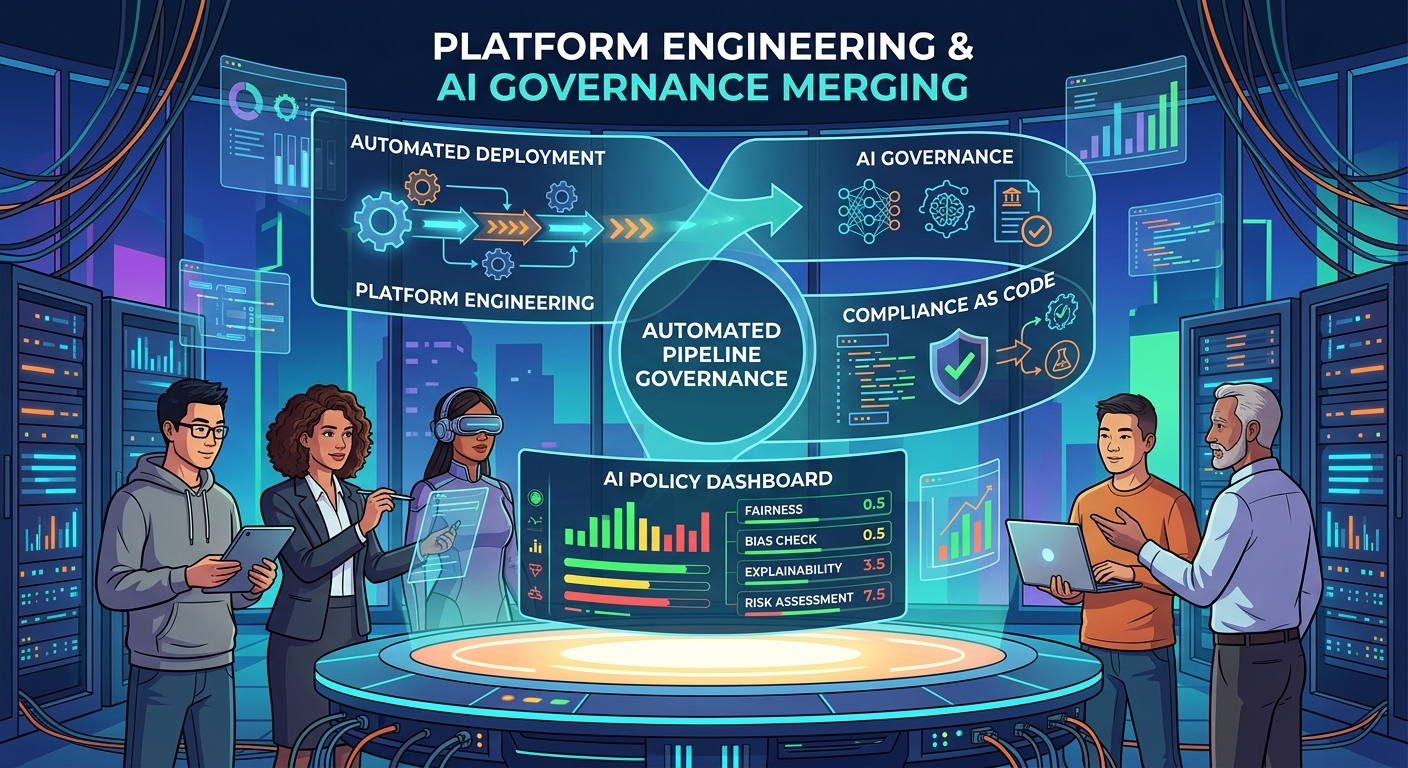

8. Platform engineering and AI governance are converging into a new discipline

The InfoQ article “Architectural Governance at AI Speed” (March 2026) argues that automated, pipeline-embedded governance has replaced review boards. The new principle: “The conformant path should be the easiest path.” Jennifer Riggins (The New Stack) documented Spotify handling 1,500+ AI-generated PRs with 47% faster support resolution — this is the operational preview of what enterprise teams face next. Sam Bhagwat (InfoQ) argues existing organizational structures simply do not map to AI project requirements, and proposes cross-functional Tiger Teams as the primary unit.

Sources: Howard et al., “Architectural Governance at AI Speed”, InfoQ, March 26, 2026; Jennifer Riggins, The New Stack, January 2, 2026; Sam Bhagwat, InfoQ podcast “Tiger Teams, Evals and Agents”, 2026

9. Context is infrastructure — and most organizations have none

Multiple independent lines of thought have converged on this: organizational knowledge — team standards, architectural decisions, problem context — must be treated as versioned, maintained engineering artifacts for AI to function well at scale. Tim O’Reilly: agents arrive as “new employees with zero context,” and 99% of knowledge work lacks proper context documentation. Rahul Garg (MartinFowler.com) proposes treating AI instructions as infrastructure: versioned, reviewed, shared team artifacts covering standards, externalized decision context, and feedback loops. Markus Eisele’s framing: “intent as a first-class artifact.”

Sources: Tim O’Reilly, O’Reilly Radar, April 7, 2026; Rahul Garg, MartinFowler.com series “Encoding Team Standards” / “Context Anchoring” / “Feedback Flywheel”, March–April 2026; Markus Eisele, O’Reilly Radar, April 8, 2026
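One way to picture context as a versioned, reviewed artifact is to give each piece of context explicit metadata and check it automatically. A minimal sketch under that assumption follows; `ContextArtifact`, `stale_artifacts`, the 90-day threshold, and the example names are all hypothetical and do not come from Garg, O’Reilly, or Eisele.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ContextArtifact:
    """Hypothetical record for one piece of team context given to agents."""
    name: str
    version: str
    reviewed_by: tuple   # reviewers who signed off on this version
    last_reviewed: date


def stale_artifacts(artifacts, today, max_age_days=90):
    """Names of artifacts that are unreviewed or older than the threshold."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        a.name
        for a in artifacts
        if not a.reviewed_by or a.last_reviewed < cutoff
    ]


artifacts = [
    ContextArtifact("coding-standards", "1.4.0", ("alice",), date(2026, 3, 1)),
    ContextArtifact("adr-012-messaging", "1.0.0", ("bob",), date(2025, 12, 1)),
    ContextArtifact("review-checklist", "0.1.0", (), date(2026, 4, 1)),
]
print(stale_artifacts(artifacts, today=date(2026, 4, 15)))
```

Here the architectural decision record is flagged for age and the checklist is flagged because nobody has reviewed it.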

10. The individual-to-organization productivity gap is now measurable

The disconnect is no longer anecdotal. Microsoft Research (April 2026, 4 authors): enterprise users save 40–60 minutes/day, but employment for workers aged 22–25 in AI-exposed roles has declined 16% relative to less-exposed roles. The HBR piece by Korst, Puntoni, and Tambe (Wharton) documented that “most large organizations have crossed a threshold: AI moved from something they were considering to something they were officially committed to” — but the translation from individual productivity to business outcomes is still not happening. Workers using AI “can be perceived as less capable, even when their output is identical.”

Sources: Teevan, Butler, Hofman, Janssen, “New Future of Work: AI is driving rapid change, uneven benefits”, Microsoft Research, April 9, 2026; Korst, Puntoni, Tambe, HBR, April 8, 2026

So Where Does This Leave Us?

The discourse has caught up to the diagnosis. The practitioner-focused sources surveyed here from the last three months converge on the same points: tools have outpaced processes, individual gains are not translating to organizational throughput, and the bottleneck is now organizational design, not coding speed.

What is less common is a concrete prescription. Most sources are still diagnosing. The components of a solution exist in the discourse — governance infrastructure, intent-as-infrastructure, new roles, auto-merge lanes — but nobody has unified them into a complete delivery model.

That is still the work to be done.

The race is still on. And now we have the data to prove it.