AI Disclosure: This blog post was generated through a conversation with Claude Code. The research was AI-assisted. Here is the prompt that drove it:

“Research the top 3 AI-enabled software-development processes that are currently (last 3 months) discussed and suggested. Identify the top 3 AI-enabled full SDLC methodologies (end-to-end delivery frameworks, not just tools or single-step patterns) that are being actively discussed, proposed, or adopted in the software engineering community. For each: core idea, key proponents, adoption status, a ‘watch out’, and sources.”

The discourse has caught up to the diagnosis.

If you have been following the conversation about the Augmented Software-Engineering Organization (ASEO) — the idea that AI is not an evolution but a revolution requiring a fundamental redesign of how engineering organizations deliver software — the question that naturally follows is: what does the new process actually look like?

The answer, as of April 2026, is that three distinct methodologies have emerged from the noise. None of them is finished. None of them has a definitive playbook. But all three are concrete enough to evaluate, and one of them is mature enough to bet on.

Here they are.

1. Spec-Driven Development (SDD)

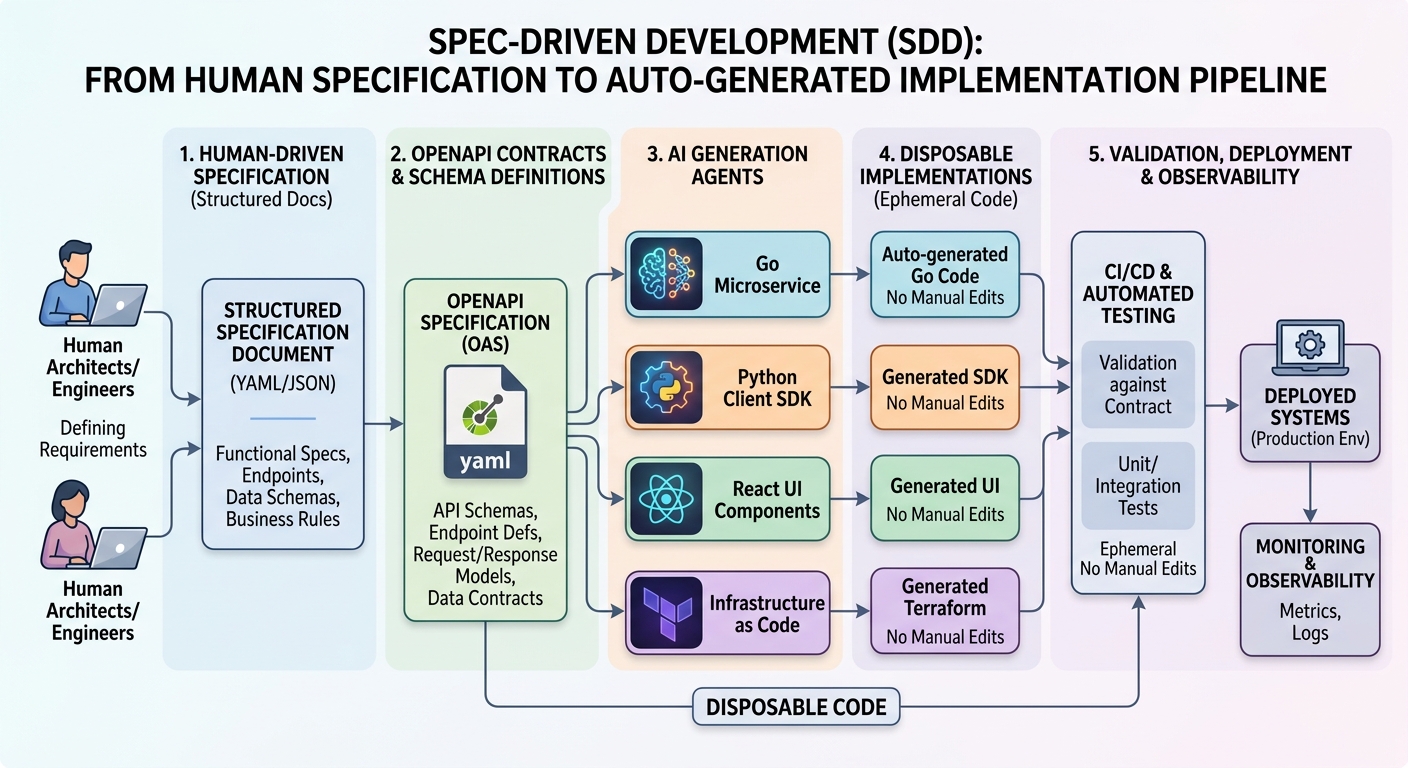

The most structurally radical of the three. Spec-Driven Development inverts the traditional SDLC by making the specification — not the code — the primary maintained artifact. Engineers write structured functional specs (using formats like EARS notation or OpenAPI contracts). AI agents then generate, regenerate, and validate code against those specs continuously. The code is explicitly disposable — never manually edited, always regenerable from an upstream spec.
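To make the spec format concrete: EARS (Easy Approach to Requirements Syntax) restricts each requirement to a handful of sentence templates, which is what makes a spec machine-checkable. The sketch below is a deliberately simplified classifier for the basic templates. The regular expressions, the `classify` helper, and the sample requirements are illustrative assumptions, not part of any tool named in this post.

```python
import re
from typing import Optional

# Simplified EARS templates (assumption: one sentence per requirement,
# ending in a period). Real specs would need a more forgiving parser.
EARS_PATTERNS = [
    ("unwanted-behaviour", re.compile(r"^If .+, then the .+ shall .+\.$")),
    ("event-driven", re.compile(r"^When .+, the .+ shall .+\.$")),
    ("state-driven", re.compile(r"^While .+, the .+ shall .+\.$")),
    ("optional-feature", re.compile(r"^Where .+, the .+ shall .+\.$")),
    ("ubiquitous", re.compile(r"^The .+ shall .+\.$")),
]


def classify(requirement: str) -> Optional[str]:
    """Return the name of the first EARS template the sentence fits, else None."""
    text = requirement.strip()
    for name, pattern in EARS_PATTERNS:
        if pattern.match(text):
            return name
    return None


# Hypothetical requirements for an order service.
requirements = [
    "When the user submits an order, the order service shall send a confirmation email.",
    "The order service shall log every request.",
    "Make checkout faster",
]
for req in requirements:
    print(classify(req), "<-", req)
```

A sentence that fits no template, such as the last one, is exactly the kind of input a spec review would send back before any agent generates code from it.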

The five-layer pipeline proposed by Griffin and Carroll (InfoQ, January 2026) runs from Specification → Generation → Artifact → Validation → Runtime, with the key principle that drift between spec and runtime is detected and flagged automatically. AWS’s Kiro IDE tool operationalizes this as: natural language → structured requirements → architecture doc → task list → agent-driven implementation. Tessl’s version is the most provocative: the spec replaces the codebase as the thing your team actually maintains.
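The drift-detection principle can be sketched in a few lines. This is a minimal illustration, assuming the spec is an OpenAPI-style `paths` mapping and that the set of operations actually served at runtime is available from somewhere (a router dump, access logs); `Operation`, `spec_operations`, and `detect_drift` are hypothetical names, not the API of Kiro, Tessl, or the InfoQ pipeline.

```python
from __future__ import annotations

from dataclasses import dataclass

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


@dataclass(frozen=True, order=True)
class Operation:
    method: str
    path: str


def spec_operations(spec: dict) -> set[Operation]:
    """Collect the (method, path) pairs declared under the spec's 'paths' key."""
    ops = set()
    for path, item in spec.get("paths", {}).items():
        for key in item:
            if key.lower() in HTTP_METHODS:  # skip non-method keys such as 'parameters'
                ops.add(Operation(key.upper(), path))
    return ops


def detect_drift(spec: dict, runtime_ops: set[Operation]) -> dict[str, list[Operation]]:
    """Compare declared operations with observed ones and report both directions."""
    declared = spec_operations(spec)
    return {
        "missing_at_runtime": sorted(declared - runtime_ops),
        "undeclared_in_spec": sorted(runtime_ops - declared),
    }


# Hypothetical spec and runtime snapshot.
spec = {"paths": {"/orders": {"get": {}, "post": {}}, "/orders/{id}": {"get": {}}}}
runtime = {
    Operation("GET", "/orders"),
    Operation("POST", "/orders"),
    Operation("DELETE", "/orders/{id}"),
}
drift = detect_drift(spec, runtime)
print(drift)
```

Reporting both directions matters: an operation missing at runtime points to a generation failure, while an undeclared operation points to a manual edit, which SDD explicitly rules out.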

The fundamental shift for engineering managers: your engineers stop being code authors and become contract authors. Architecture reviews become spec reviews. PRs become spec diffs.

Adoption status: Early and experimental. Kiro and GitHub’s spec-kit have active early adopters. The Thoughtworks Technology Radar Vol. 34 (April 2026) rates it “Assess” — worth piloting, not yet ready for broad production use.

Watch out: Thoughtworks delivers the sharpest critique directly: “teams may be relearning a bitter lesson — that handcrafting detailed rules for AI ultimately doesn’t scale.” Specs can become the new legacy: large, opaque, and owned by no one. The cognitive shift to contract-first reasoning is non-trivial, and proving that generated code is correct — not just specification-conformant — remains an open problem.

Sources: Leigh Griffin and Ray Carroll, “Spec Driven Development: When Architecture Becomes Executable,” InfoQ, January 12, 2026; Thoughtworks Technology Radar Vol. 34, April 2026; Markus Eisele, O’Reilly Radar, April 8, 2026

2. Harness Engineering

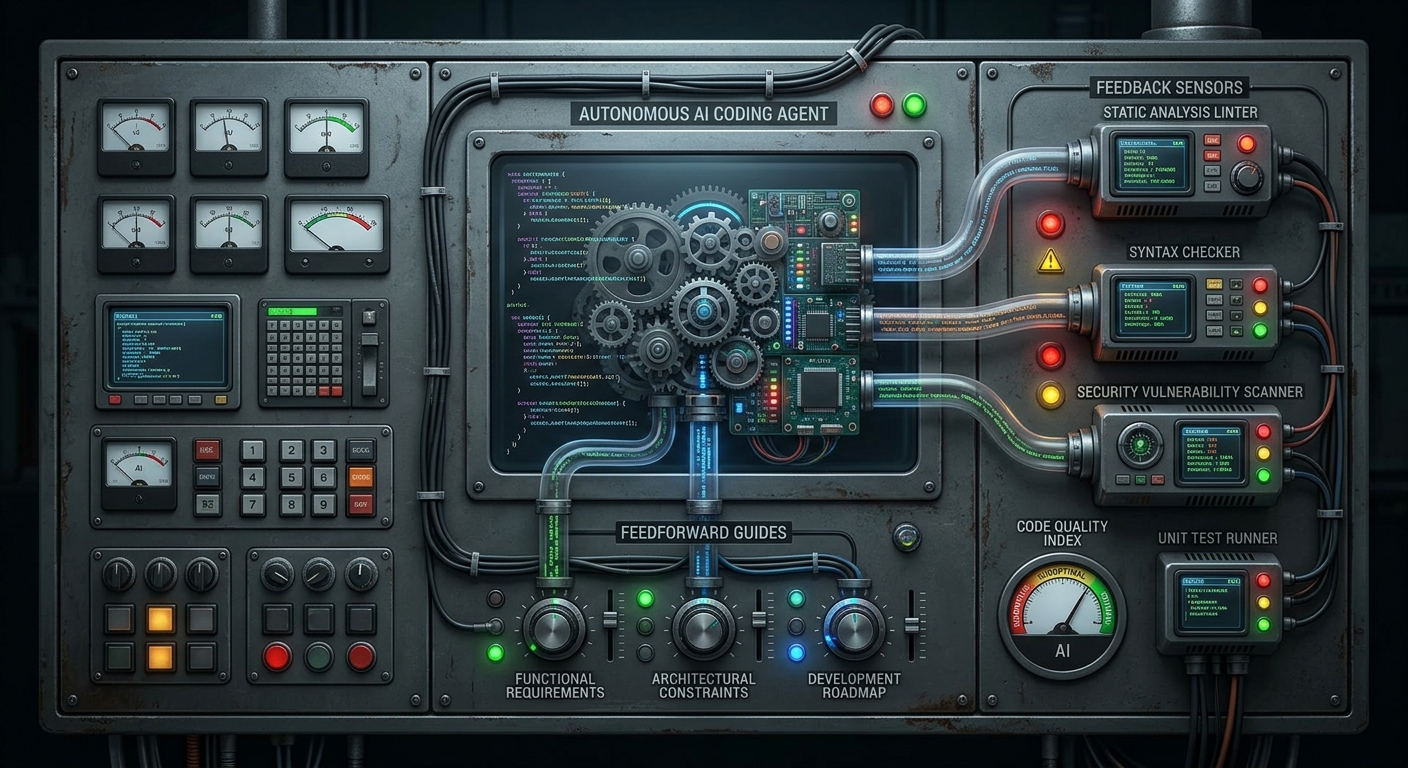

The most mature and most precisely specified of the three. Coined by Birgitta Böckeler at Thoughtworks (February 2026, published April 2026), Harness Engineering proposes that the central engineering discipline for AI-assisted teams is no longer writing code — it is designing the control system around the coding agent.

The harness is everything around the model itself: feedforward controls (guides that steer the agent before it acts) and feedback controls (sensors that detect problems after the agent acts and trigger self-correction). These operate across two axes:

- Timing: Feedforward (anticipatory) vs. Feedback (reactive)

- Execution: Computational (deterministic: linters, type checkers, test runners — fast and cheap) vs. Inferential (semantic: AI-powered reviewers — slower, capable of detecting meaning-level problems)

Three harness layers cover the full lifecycle: a Maintainability Harness (internal code quality), an Architecture Fitness Harness (structural conformance), and a Behaviour Harness (functional correctness). The timing model — keep quality left — maps directly to a delivery pipeline: fast computational sensors pre-commit, comprehensive AI reviews post-integration, SLO drift monitoring at runtime.
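A feedback control of this kind can be sketched as a loop: run the sensors, hand any findings back to the agent, and repeat until the sensors are quiet or a round limit is hit. Everything named below (`Sensor`, `run_feedback_loop`, the two toy sensors, and `stub_agent_revise`, which stands in for a real coding agent) is a hypothetical illustration, not code from Böckeler's article.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Sensor:
    name: str
    kind: str  # "computational" (deterministic) or "inferential" (AI-powered)
    check: Callable[[str], List[str]]  # returns findings for a piece of code


def bare_except_check(code: str) -> List[str]:
    return [f"line {i} uses a bare except"
            for i, line in enumerate(code.splitlines(), 1)
            if line.strip() == "except:"]


def long_line_check(code: str) -> List[str]:
    return [f"line {i} exceeds 100 characters"
            for i, line in enumerate(code.splitlines(), 1)
            if len(line) > 100]


def collect_findings(code: str, sensors: List[Sensor]) -> List[str]:
    # Run the fast, cheap computational sensors before any inferential ones.
    ordered = sorted(sensors, key=lambda s: s.kind != "computational")
    return [f"{s.name}: {msg}" for s in ordered for msg in s.check(code)]


def run_feedback_loop(code: str, sensors: List[Sensor],
                      revise: Callable[[str, List[str]], str],
                      max_rounds: int = 3) -> Tuple[str, List[str]]:
    """Feed sensor findings back to the agent until clean or out of rounds."""
    findings = collect_findings(code, sensors)
    rounds = 0
    while findings and rounds < max_rounds:
        code = revise(code, findings)
        findings = collect_findings(code, sensors)
        rounds += 1
    return code, findings  # leftover findings go to a human


def stub_agent_revise(code: str, findings: List[str]) -> str:
    # Stand-in for an agent call: only knows how to fix bare excepts.
    return code.replace("except:", "except Exception:")


SENSORS = [
    Sensor("bare-except", "computational", bare_except_check),
    Sensor("long-line", "computational", long_line_check),
]
draft = "try:\n    value = int('42')\nexcept:\n    value = 0\n"
fixed, remaining = run_feedback_loop(draft, SENSORS, stub_agent_revise)
print(fixed, remaining)
```

The round limit is the design choice worth noting: findings that survive it are escalated rather than retried indefinitely, which keeps the human in the loop only for what the sensors and the agent could not settle between them.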

The fundamental shift for engineering managers: quality assurance stops being a gate and becomes a continuously active control system. The senior engineer’s job is to design, tune, and evaluate the harness — not to review 500-line diffs.

Adoption status: The most production-ready of the three. The Thoughtworks Technology Radar rates “Feedback Sensors for Coding Agents” at Trial and “Curated Shared Instructions for Software Teams” at Adopt. Thoughtworks has deployed the framework in client engagements. It is the closest thing to a named, deployable methodology that currently exists.

Watch out: Böckeler names the gap directly: the Behaviour Harness — proving functional correctness — is largely unsolved. Computational sensors catch structural problems; neither computational nor inferential sensors reliably catch misdiagnosed requirements or over-engineered solutions. There is also a diagnostic paradox: if sensors never fire, is that evidence of quality or of inadequate detection? As harnesses grow, instruction coherence becomes a serious maintenance burden — what the Thoughtworks Radar separately labels “Agent Instruction Bloat” (Hold).

Sources: Birgitta Böckeler, “Harness Engineering for Coding Agent Users,” martinfowler.com, April 2, 2026; Rahul Garg, “Feedback Flywheel” and “Context Anchoring,” martinfowler.com, March–April 2026; Thoughtworks Technology Radar Vol. 34, April 2026

3. Agent-Driven Development (ADD)

The most widely practiced but least governed of the three. Agent-Driven Development designates AI coding agents as the primary contributors to software, with human engineers shifting roles from implementers to strategic planners, architects, and final reviewers.

The canonical ADD workflow (Tyler McGoffin, GitHub Copilot Applied Science, March 2026):

- Plan — conversational, verbose scoping with the agent in planning mode (human-led)

- Implement — agent executes autonomously; human observes

- Agent Review — a dedicated review agent inspects output against quality criteria

- Human Oversight — final human review enforcing architectural patterns and business intent

- Maintenance Loop — periodic automated reviews for duplication, coverage, and documentation gaps

DHH at 37signals runs two models simultaneously (a fast model for iteration, a powerful model for complex reasoning) and applies the same quality bar as pre-AI work — the point is not to lower standards but to reach them faster. Amazon enforces a governance layer: junior engineers may not merge agent-generated code without senior sign-off.
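A governance rule like the one attributed to Amazon is easy to state as a merge check. The sketch below encodes only the rule as described in this post; the `PullRequest` fields, the level names, and `may_merge` are assumptions made for illustration and say nothing about how Amazon's tooling actually works.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PullRequest:
    author_level: str          # e.g. "junior" or "senior" (hypothetical levels)
    agent_generated: bool      # True if a coding agent produced the change
    approver_levels: List[str] = field(default_factory=list)


def may_merge(pr: PullRequest) -> bool:
    """Block agent-generated code from junior authors unless a senior approved it."""
    if pr.agent_generated and pr.author_level == "junior":
        return "senior" in pr.approver_levels
    return True  # the rule as stated covers no other case


blocked = PullRequest("junior", agent_generated=True, approver_levels=["junior"])
allowed = PullRequest("junior", agent_generated=True, approver_levels=["senior"])
print(may_merge(blocked), may_merge(allowed))
```

In practice a check like this would run as a required status on the pull request, so the policy is enforced by the pipeline rather than by convention.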

The fundamental shift for engineering managers: code authorship is no longer the bottleneck. Clean architecture, comprehensive tests, and thorough documentation become prerequisites for agent effectiveness rather than nice-to-haves that get cut under deadline pressure. This inverts traditional prioritization entirely.

Adoption status: Actively practiced at scale. 37signals, Amazon, Meta, Uber, Sentry, and Stripe (reportedly thousands of agent-generated PRs per week) are all cited in The Pragmatic Engineer’s March and April 2026 coverage. Adoption is ahead of methodology — teams are doing it before any standard playbook exists.

Watch out: The Pragmatic Engineer’s March 2026 investigation (“Are AI Agents Actually Slowing Us Down?”) provides the counter-evidence: a higher volume of pull requests does not correlate with business impact. Amazon’s 13-hour AWS outage was traced in part to AI-assisted changes. The core failure mode is what Thoughtworks labels “Codebase Cognitive Debt” (Hold): speed metrics look good while human understanding of the system quietly degrades. ADD assumes human review can scale with agent output. The evidence so far suggests it cannot: without structural changes, review becomes the new bottleneck.

Sources: Tyler McGoffin, “Agent-Driven Development in Copilot Applied Science,” GitHub Blog, March 31, 2026; Gergely Orosz, “Are AI Agents Actually Slowing Us Down?” and “DHH’s New Way of Writing Code,” The Pragmatic Engineer, March–April 2026; Thoughtworks Technology Radar Vol. 34, April 2026

Side by Side

| | SDD | Harness Engineering | ADD |
|---|---|---|---|
| Human role | Contract author | Control system designer | Planner + final reviewer |
| Where judgment lives | Spec-writing time | Harness-design time | Review time |
| Code status | Disposable artifact | Agent output under control | Primary deliverable |
| Maturity | Experimental | Production-ready (partial) | Widely practiced, ungoverned |
| Main failure mode | Spec becomes new legacy | Behaviour harness gap | Review bottleneck |
| Best fit | Greenfield, API-heavy systems | Teams with existing CI/CD discipline | Orgs already running agents at scale |

The Pattern Underneath

All three methodologies are solving the same problem from different angles: agent output is outpacing human judgment.

SDD moves judgment left — to the specification. Harness Engineering moves judgment into the control plane — to sensor and guide design. ADD moves judgment to the right — to the review and oversight layer. The failure mode is identical across all three: the methodology breaks when the human judgment layer is underpowered relative to the volume of agent output passing through it.
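The shared failure mode is simple arithmetic: if agent output arrives faster than the judgment layer can process it, the unreviewed backlog grows every week. The sketch below makes that concrete with invented numbers; `review_backlog` and the rates are hypothetical and are not drawn from any of the sources cited here.

```python
from typing import List


def review_backlog(prs_per_week: int, reviews_per_week: int, weeks: int) -> List[int]:
    """Return the unreviewed-PR backlog at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # Backlog cannot go negative: spare review capacity is simply unused.
        backlog = max(0, backlog + prs_per_week - reviews_per_week)
        history.append(backlog)
    return history


# Hypothetical team: agents open 120 PRs a week, humans can review 80.
print(review_backlog(120, 80, 4))  # [40, 80, 120, 160]
# Same team after agent output is throttled below review capacity.
print(review_backlog(60, 80, 3))   # [0, 0, 0]
```

The same model applies to spec reviews in SDD and to harness tuning in Harness Engineering; only the unit of work passing through the judgment layer changes.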

This is not a tool problem. It is an organizational design problem. The question for engineering managers in 2026 is not “which AI tools should we adopt?” It is: “where in our delivery pipeline do we have enough judgment capacity to actually govern what the agents produce?”

Where to Start

If your organization has strong CI/CD discipline and an existing investment in automated quality gates, Harness Engineering is the most actionable starting point. It builds on what you already have, introduces control incrementally, and has the most production evidence behind it. It is also the only one of the three with a named, published framework you can hand to a team lead on Monday morning.

SDD is the most transformative long-term bet — but it requires a level of specification discipline most organizations do not yet have. ADD is already happening whether you planned it or not; the question is whether you govern it before the cognitive debt compounds.

The race is still on. But now we have frameworks to run it with.